Entropy

Entropy is a measure of the disorder, or unpredictability, in a system. In
computer science, the term is applied to random data: it measures how many bits
of randomness something contains. For example, an eight-letter lowercase
password such as frjnqaip contains less entropy than an eight-character
alphanumeric password that mixes upper- and lowercase letters and digits, such
as HH8rfTiF, even though both have the same length. This is because there are
more possibilities for the latter: 62 choices per character instead of 26.

Passgen can calculate the entropy of the passphrases it generates. This gives
you a useful estimate of how much computing power would be required to crack
the passphrase, assuming an adversary knows the exact pattern you used to
generate it. Use the --entropy flag (written as -e in the examples below) to
tell passgen to compute the entropy as it generates passwords. For example:

$ passgen -e '[a-z]{8}'

entropy: 37.603518 bits

frjnqaip

$ passgen -e '[A-Za-z0-9]{8}'

entropy: 47.633570 bits

Q5cO12sF

In general, the longer a passphrase is, or the more choices it draws from (more possible letters, a longer wordlist), the higher its entropy, and therefore the more secure it is.
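The figures reported above match what you get from multiplying the length by the base-2 logarithm of the alphabet size. The following is a minimal sketch of that calculation, assuming every character is chosen uniformly and independently. The function name `uniform_entropy_bits` is hypothetical, and this is not necessarily how passgen computes the value internally.

```python
import math


def uniform_entropy_bits(alphabet_size: int, length: int) -> float:
    """Entropy in bits of `length` symbols drawn uniformly and
    independently from an alphabet of `alphabet_size` symbols."""
    if alphabet_size < 1 or length < 0:
        raise ValueError("alphabet_size must be >= 1 and length >= 0")
    return length * math.log2(alphabet_size)


# [a-z]{8}: 26 possible characters, 8 positions
print(f"{uniform_entropy_bits(26, 8):.6f}")  # 37.603518

# [A-Za-z0-9]{8}: 26 + 26 + 10 = 62 possible characters, 8 positions
print(f"{uniform_entropy_bits(62, 8):.6f}")  # 47.633570
```

Because the length multiplies the per-character entropy, adding characters and widening the alphabet both raise the total, which is the relationship described above.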

Calculator

Given some public data, it is possible to estimate how much time an adversary would need to crack a passphrase of a given entropy, assuming that the adversary knows the pattern used to generate it.

| Name | Value |
|---|---|
| Entropy | |
| Algorithm | |
| Budget | |
| Time | |
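As a rough illustration of the kind of estimate such a calculator produces, the sketch below converts an entropy value into an average cracking time. It assumes a brute-force search that succeeds, on average, after trying half of all candidates. The function name `expected_crack_seconds` and the guess rate `ASSUMED_RATE` are hypothetical stand-ins for the algorithm and budget inputs, not values taken from this page.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def expected_crack_seconds(entropy_bits: float, guesses_per_second: float) -> float:
    """Average time for an exhaustive search to find the passphrase.

    The search space holds 2**entropy_bits candidates, and on average
    the adversary succeeds after trying half of them.
    """
    if guesses_per_second <= 0:
        raise ValueError("guesses_per_second must be positive")
    return 2.0 ** (entropy_bits - 1) / guesses_per_second


# Hypothetical guess rate. Real rates depend heavily on the hashing
# algorithm and on the hardware the adversary can afford.
ASSUMED_RATE = 1e10

for bits in (37.603518, 47.633570, 100.0):
    years = expected_crack_seconds(bits, ASSUMED_RATE) / SECONDS_PER_YEAR
    print(f"{bits:10.6f} bits -> about {years:.3g} years")
```

A slower hashing algorithm lowers the achievable guess rate, and a larger budget raises it. Both change the result only by a constant factor, whereas each extra bit of entropy doubles it.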

Recommendation

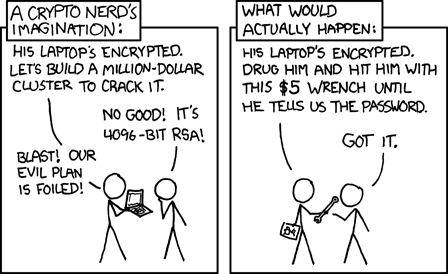

We recommend using passphrases with at least 100 bits of entropy. Every additional bit doubles the computational effort needed to crack the passphrase, so it is better to err on the side of caution. Also keep in mind that the passphrase is often not the weakest link in the chain; the human is.

Security (XKCD 538)
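To make the 100-bit recommendation concrete, the sketch below estimates how many uniformly random characters a passphrase needs to reach a target entropy. The helper `min_length_for_entropy` is hypothetical and assumes each character is drawn independently from the same alphabet.

```python
import math


def min_length_for_entropy(target_bits: float, alphabet_size: int) -> int:
    """Smallest number of uniformly random symbols whose combined
    entropy is at least `target_bits`."""
    if alphabet_size < 2:
        raise ValueError("alphabet_size must be at least 2")
    return math.ceil(target_bits / math.log2(alphabet_size))


# Lowercase letters only, as in the pattern [a-z]
print(min_length_for_entropy(100, 26))  # 22

# Upper- and lowercase letters plus digits, as in [A-Za-z0-9]
print(min_length_for_entropy(100, 62))  # 17
```

The same function applies to wordlist-based passphrases if `alphabet_size` is set to the number of words in the list.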